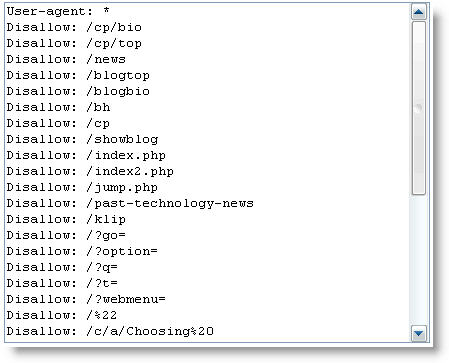

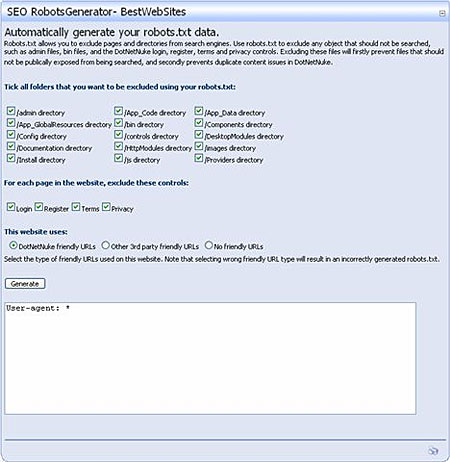

For webmasters who have been troubled by search engine robots crawling into areas that do not need to be indexed, there’s finally a solution. Google has released a robots.txt generator that lets a webmaster create a set of instructions for robots to follow. Assuming we are all familiar with Googlebot, we also know it can be a real pain to keep issuing instructions to it. Although robots.txt files are not that difficult to create, for some site owners it’s nothing short of a nightmare.

Therefore, to ease our dilemma, Google issued this file generator. Google seems determined to be on top of everything, as this is the first time that any search engine has released such a generator. It is available to all webmasters in Google Webmaster Tools. The file it produces asks search engines not to crawl content that webmasters wish to keep out of the index.
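To make the idea concrete, here is a minimal sketch of what a generator of this kind produces. It is not Google’s tool; the function name `build_robots_txt` and the example paths are hypothetical, and only the basic `User-agent` and `Disallow` directives are covered.

```python
def build_robots_txt(rules):
    """Build robots.txt text from a mapping of user-agent -> disallowed paths.

    `rules` is a hypothetical input format chosen for this sketch.
    """
    lines = []
    for agent, disallowed in rules.items():
        lines.append(f"User-agent: {agent}")
        if not disallowed:
            # An empty Disallow value means nothing is blocked for this agent.
            lines.append("Disallow:")
        for path in disallowed:
            lines.append(f"Disallow: {path}")
        lines.append("")  # a blank line separates one group from the next
    return "\n".join(lines)


# Example paths are placeholders, not recommendations.
robots_txt = build_robots_txt({
    "Googlebot": ["/private/"],
    "*": ["/private/", "/tmp/"],
})
print(robots_txt)
```

The printed text is what would be saved as `robots.txt` and uploaded to the site.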

Once the robots.txt file is created and uploaded, webmasters can use the ‘robots.txt analysis tool’ to check that the file blocks and allows what they intended.
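A similar check can be run locally before uploading. The sketch below uses Python’s standard `urllib.robotparser` module rather than Google’s analysis tool, so results for edge cases may differ from how Googlebot itself reads the file; the sample rules, the helper name `is_allowed`, and the example.com URLs are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Sample rules, assumed for this example.
SAMPLE = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


blocked = is_allowed(SAMPLE, "Googlebot", "https://www.example.com/private/report.html")
open_page = is_allowed(SAMPLE, "Googlebot", "https://www.example.com/index.html")
print(blocked, open_page)
```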

One very important thing to remember is that once the editing is done, the file must be uploaded to the root directory of the website, not to a subdirectory. This is because robots look for robots.txt only at the root of the site; a copy placed in a subdirectory is simply ignored.
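The root-only rule means that the robots.txt location depends only on the site’s scheme and host, never on the path of the page being crawled. A small sketch, with a hypothetical helper name `robots_txt_url` and a placeholder domain:

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url):
    """Return the robots.txt URL that applies to `page_url`.

    Only the scheme and host are kept; the page's own path and
    query string play no part in where robots.txt is looked up.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


location = robots_txt_url("https://www.example.com/blog/2008/post.html?x=1")
print(location)
```

So a file uploaded to `/blog/robots.txt` would never be requested for pages under `/blog/`.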

The generator creates files that Googlebot will understand, and so will most of the other major robots. However, some robots might not be able to understand these files. The robots.txt file should also be considered a request only, which means some rogue robots might ignore it and still crawl a website’s confidential files or non-indexed areas.

The release of this tool puts another feather in Google’s cap, making it the undisputed online emperor.